My top concern, as application engineers come to spend 90%+ of their time reviewing AI-generated code in the coming years, is human review speed.

In the real world, someone on the team must take accountability for the correctness and performance of the source code. It doesn't make sense for anyone to make a disclaimer like:

"This system is generated by AI and might contain errors."

If 99%+ of source code will eventually be generated by LLMs, the human review process becomes critical.

The underestimated effect of programming language choice

I have 8 years of application development experience from before the GenAI era, mostly in strongly, statically typed programming languages such as Java, Scala, Swift, TypeScript, and Kotlin, alongside JavaScript.

Over the past two years, the latest GenAI libraries have usually been released in Python first. As a result, I have been using LLMs heavily to generate Python code while building and exploring GenAI PoCs with my customers across frameworks such as LangChain, LangGraph, Google ADK, Strands Agents, and more.

The longer I read and write Python, the more I miss statically typed programming languages, especially Kotlin and its compiler.

Before the GenAI era, Kotlin was always my go-to programming language because of its elegant design. Its rich language features let me express myself through different forms of abstraction very efficiently. Static typing and type inference help me navigate others' source code effectively.

Now, in the GenAI era, LLMs can generate high-quality source code for many popular programming languages. The underestimated part is leveraging the compiler as an agent tool to guarantee that the generated output has zero type mismatches or syntax errors at compile time.
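As a rough illustration of the compiler-as-a-tool idea, the sketch below shows a check an agent could call on its own output before handing it to a human. The function name `check_generated_code` and the shape of its return value are hypothetical and not part of any agent framework. It relies on Python's built-in `compile()`, which only catches syntax errors; catching type mismatches would additionally need a real compiler (as in Kotlin) or an external type checker.

```python
from typing import Optional


def check_generated_code(source: str, filename: str = "<generated>") -> Optional[str]:
    """Hypothetical agent tool: return None if `source` parses, else an error message.

    Only syntax is checked here; the generated code is never executed.
    """
    try:
        compile(source, filename, "exec")
    except SyntaxError as err:
        return f"{filename}:{err.lineno}: {err.msg}"
    return None


# The agent would feed any non-None message back to the LLM and retry.
print(check_generated_code("x = 1 + 2"))               # valid source
print(check_generated_code("def broken(:\n    pass"))  # malformed source
```

The design choice is to return the error as data rather than raise, so the agent loop can pass it straight back into the next prompt.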

Code reviewing efficiency

In my 8 years of development experience, I could navigate deep into the source code of Java/Kotlin open-source libraries in my IDE 99.9% of the time, tracing the underlying low-level implementations and reading the source documentation. This significantly helped me build confidence and learn quickly when using new libraries.

With Python, it is less than 50%. Some Python implementations rely on dynamic typing with no type hints, or with Union type hints, which makes deep navigation difficult.

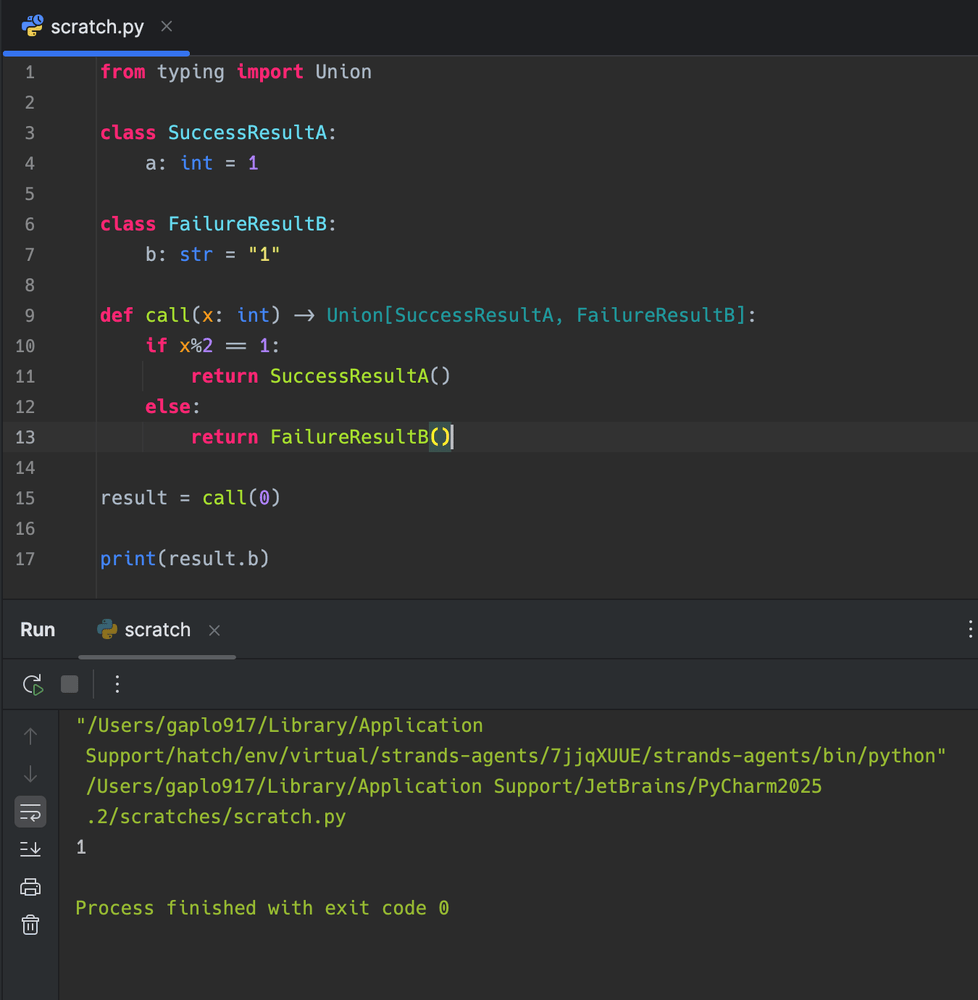

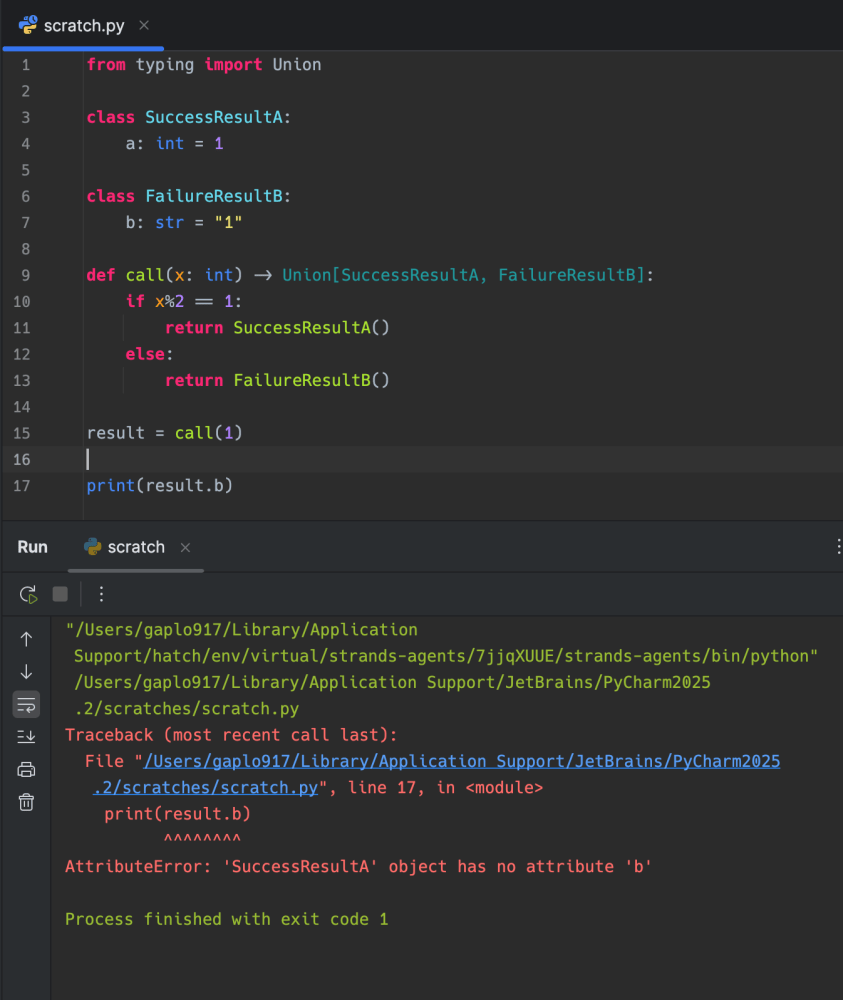

Here is a common Result type implementation. Can you spot the error during review?

    from typing import Union


    class SuccessResultA:
        a: int = 1


    class FailureResultB:
        b: str = "1"


    def call(x: int) -> Union[SuccessResultA, FailureResultB]:
        if x % 2 == 1:
            return SuccessResultA()
        else:
            return FailureResultB()


    result = call(0)
    print(result.b)

Let's run it.

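Because the result type is determined dynamically at runtime, the program works only some of the time. Here call(0) happens to return FailureResultB, so result.b exists; with an odd input such as call(1), the same line raises an AttributeError.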
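One way to make this kind of code safe regardless of which variant comes back is to narrow the union with `isinstance` before touching variant-specific fields. The sketch below is self-contained and redefines the two result classes; the helper name `describe` is hypothetical.

```python
from typing import Union


class SuccessResultA:
    a: int = 1


class FailureResultB:
    b: str = "1"


def call(x: int) -> Union[SuccessResultA, FailureResultB]:
    if x % 2 == 1:
        return SuccessResultA()
    return FailureResultB()


def describe(result: Union[SuccessResultA, FailureResultB]) -> str:
    # isinstance narrows the union, so each branch only touches fields
    # that exist on that variant.
    if isinstance(result, SuccessResultA):
        return f"success: a={result.a}"
    return f"failure: b={result.b}"


print(describe(call(0)))  # even input takes the FailureResultB branch
print(describe(call(1)))  # odd input takes the SuccessResultA branch
```

Nothing in the Python runtime forces a reviewer or an LLM to write this check. A static type checker such as mypy or pyright has to be run as a separate step to flag an unguarded `result.b`.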

How would you review the source code effectively if an LLM generates Python code with 4 to 10 abstraction layers of union types, built from the ground up?

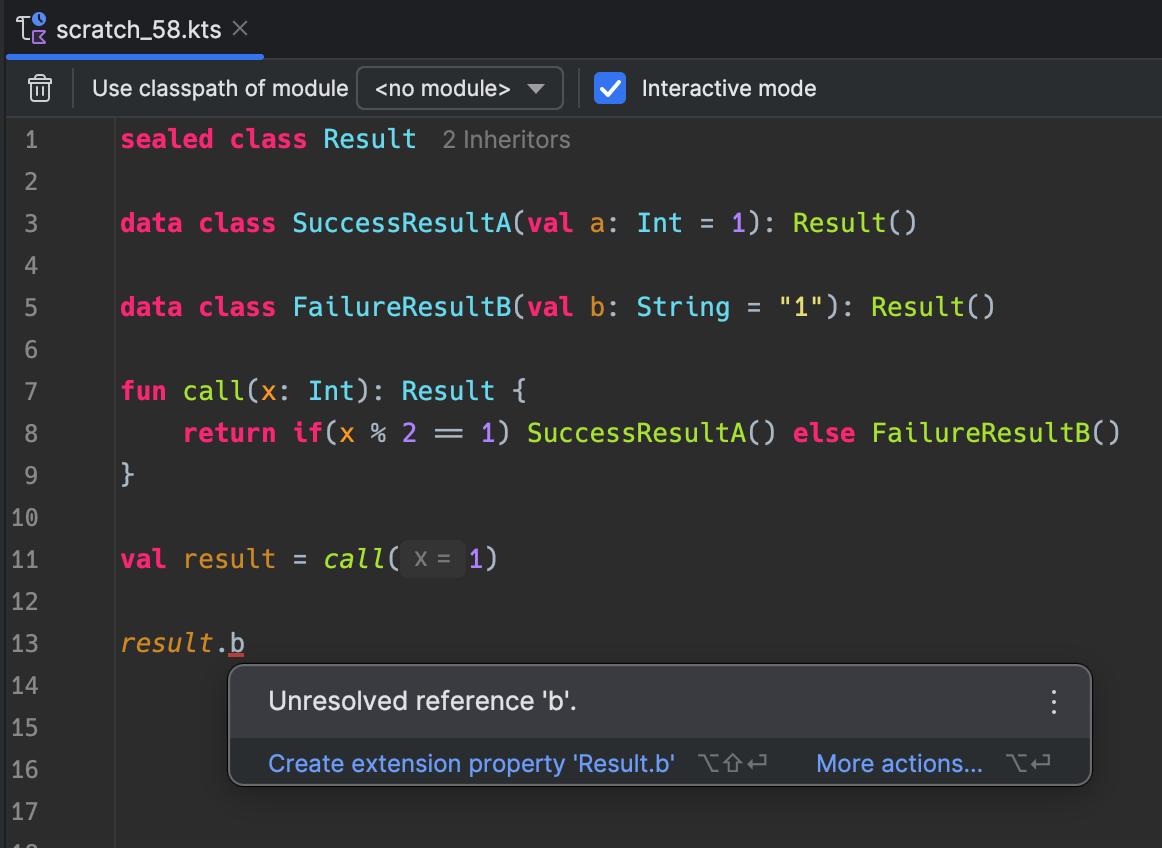

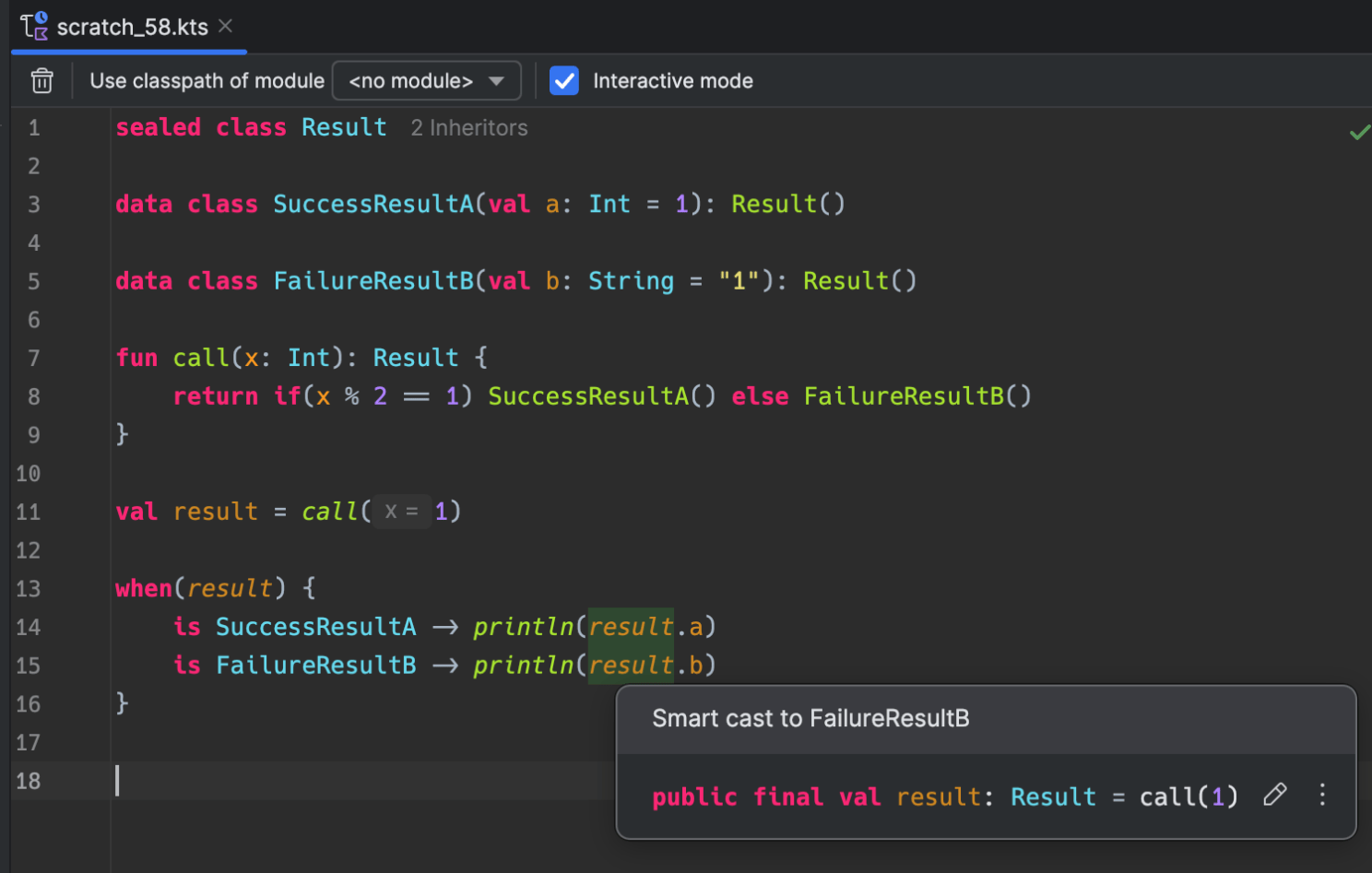

In contrast, the Kotlin abstraction closest to Python's Union type is the sealed class, which is statically typed and checked by the compiler at compile time.

The best part of a compile-time error is that it has no runtime dependencies or side effects.

When I review source code, I check and navigate the types. Kotlin's smart cast annotations really shine in this area.
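For comparison, Python has no sealed classes, but a rough approximation of the exhaustiveness check is possible when a static type checker such as mypy or pyright is run over the code. The sketch below is not Kotlin's compile-time guarantee: the names `Success`, `Failure`, `Result`, `handle`, and the helper `assert_never` are all hypothetical, and at runtime the check only fires if an unhandled variant actually reaches it.

```python
from dataclasses import dataclass
from typing import NoReturn, Union


@dataclass(frozen=True)
class Success:
    value: int


@dataclass(frozen=True)
class Failure:
    reason: str


Result = Union[Success, Failure]


def assert_never(value: NoReturn) -> NoReturn:
    # A static type checker reports a call to this as an error unless
    # every variant of the union was handled before reaching it.
    raise AssertionError(f"unhandled variant: {value!r}")


def handle(result: Result) -> str:
    if isinstance(result, Success):
        return f"ok: {result.value}"
    if isinstance(result, Failure):
        return f"error: {result.reason}"
    assert_never(result)


print(handle(Success(42)))
print(handle(Failure("timeout")))
```

If a third variant is later added to `Result` without a matching branch, the type checker flags the `assert_never` call. That is the nearest Python equivalent to a Kotlin `when` over a sealed class failing to compile, with the difference that it depends on an optional external tool.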

Roughly speaking, when I review the same AI-generated source code in Kotlin, the static typing, language conciseness, and compiler reduce my cognitive load by about 80%. Compile-time type errors also cut the agentic back-and-forth time by about 80% compared to Python.

Although my current job role is unlikely to involve building complex and long-lived production systems, I hope newcomers to this industry are aware of how much the choice of programming language matters.

The technology stack for building throwaway GenAI PoCs is completely different from the one for building long-lived applications.

If you are new to the application development industry, I highly recommend spending some effort diving deep into different programming languages with AI, especially strongly and statically typed ones.

The ultimate goal is to take accountability and review AI-generated code as efficiently as possible.

Jupyter Python notebooks are great, and now there is a Kotlin equivalent: Kotlin Notebook

In terms of learning a new language, my first "wow" moment was experiencing Python Jupyter notebooks. These notebooks helped me tremendously in picking up new technologies and allowed for rapid experimentation.

When I discovered that Kotlin Notebook had become freely available and that JetBrains was building a new Kotlin-native AI agent framework (Koog), I started an open-source project to consolidate my Kotlin experiments and share them online.

GitHub repository: https://github.com/gaplo917/awesome-kotlin-notebook

I am trying to build a multi-agent system using the A2A protocol with strong static typing in Kotlin, running on my M4 Max MacBook Pro. It is for my own learning about A2A, and the experience is transferable to Python as well.

Note: I don't use LLMs to generate ideas, as that is the interesting part of being human. I use LLMs to work on my ideas instead.